This system gives teams a calm, fast way to spot risk, protect trust, and turn online signals into sharper business decisions with less guesswork.

A Safe Brand Monitoring Engine works best when it is treated as an early warning layer, because brands rarely lose trust in one dramatic moment; they lose it through small signals that get ignored.

A Safe Brand Monitoring Engine helps teams catch those signals before they grow into complaints, refunds, negative reviews, or churn.

A Safe Brand Monitoring Engine also improves the way people work together, since marketing, support, sales, and leadership can act from the same stream of evidence. It gives the team a repeatable way to decide what matters first.

A Safe Brand Monitoring Engine reduces reaction time by turning scattered comments into structured priorities instead of letting volume create confusion. Speed matters, but so does confidence in the decision.

A Safe Brand Monitoring Engine makes it easier to answer one practical question: what should we do right now, and why does it matter to revenue, retention, or reputation? Shared visibility also prevents each department from creating its own version of the truth.

A Safe Brand Monitoring Engine should not feel like a noisy dashboard that shouts at everyone all day. The goal is not more alerts; the goal is better action.

A Safe Brand Monitoring Engine should feel like a decision system that points attention toward the few issues that can change outcomes. A simple interface is easier to trust than a loud one.

A Safe Brand Monitoring Engine becomes powerful when it connects listening with action, because awareness without response still leaves the customer frustrated. The goal is not more alerts; the goal is better action.

A Safe Brand Monitoring Engine supports better judgment by revealing patterns across channels rather than forcing teams to trust a single source. Patterns across sources are far more reliable than isolated comments.

A Safe Brand Monitoring Engine is most useful when it helps people move from uncertainty to a clear next step in minutes, not days. Clear next steps reduce hesitation and protect momentum.

Core signals and customer psychology

A Safe Brand Monitoring Engine should begin with customer psychology, because people rarely say exactly what they mean when they are disappointed, confused, or ready to leave. When the emotional layer is ignored, teams respond too late or in the wrong tone.

Inside a Safe Brand Monitoring Engine, the first job is to define emotional patterns, such as urgency, frustration, relief, curiosity, and trust, so every mention is read in context. This context helps people choose the right response instead of the fastest one.

A strong Safe Brand Monitoring Engine helps teams notice the difference between an annoyed comment and a sign of deeper product mismatch. That distinction keeps response teams from confusing irritation with true danger.

When a Safe Brand Monitoring Engine is designed well, it separates temporary noise from durable risk and prevents teams from overreacting to every sharp sentence. Without that filter, every critical post looks like a crisis.

The best Safe Brand Monitoring Engine setups turn emotion into a practical signal, because emotion often predicts behavior before a metric does. Behavior is often the hidden layer behind visible language.

Leaders use a Safe Brand Monitoring Engine to understand not just what people said, but how strongly they said it and how widely the message could travel. Leadership needs both the quote and the likely consequence.

A mature Safe Brand Monitoring Engine makes room for nuance, since a complaint from one power user may matter more than ten generic compliments. Nuance protects the team from chasing the wrong signals.

What the system should capture

| Component | What it captures | Why it matters |

|---|---|---|

| Source | Reviews, social posts, support notes, calls | Shows where trust is being built or damaged |

| Tone | Positive, negative, neutral, mixed | Reveals emotional direction, not just volume |

| Topic | Product issue, pricing, support, brand, feature request | Helps route work to the right owner |

| Reach | How visible the message is | Shows urgency and possible spread |

| Timing | When the message appeared and how it changed | Helps spot spikes, patterns, and sudden shifts |

| Outcome | Response sent, issue resolved, escalation needed | Connects insight to action and learning |

Where the data should come from

A Safe Brand Monitoring Engine should collect data from the channels where trust is actually built or broken, including review sites, social platforms, communities, support desks, and calls. Channel selection should reflect where your customers are most willing to speak honestly.

That same Safe Brand Monitoring Engine becomes more valuable when it recognizes source quality, because a public complaint may need a faster response than a private note. Source quality changes how urgently a team should react.

Teams often connect Safe Brand Monitoring Engine workflows with Real Time Brand Alerts Setup so the right people see urgent changes before they spread. Fast routing prevents delays when urgency is already rising.

A Safe Brand Monitoring Engine also benefits from clean tagging, because organized labels make pattern detection much easier across product lines, regions, and customer segments. Tagging also makes future reporting cleaner and more persuasive.

The data side of a Safe Brand Monitoring Engine should include text, timestamps, source type, reach, and outcome, not just raw mention volume. Context turns raw volume into something a team can actually use.

When a Safe Brand Monitoring Engine collects enough context, it starts to reveal whether sentiment is isolated, recurring, seasonal, or tied to a release. That extra context makes trend detection more reliable.

A strong Safe Brand Monitoring Engine makes channel coverage a strategic choice, not a random list of sources pasted into a tool. Coverage should serve decisions, not just dashboard completeness.

Alerting that people actually trust

The alert layer inside a Safe Brand Monitoring Engine should distinguish between signal and alarm, because not every spike deserves a crisis response. This keeps teams focused on risk instead of reacting to every blip.

A good Safe Brand Monitoring Engine uses thresholds that reflect business risk, customer value, and message reach instead of relying on one universal rule. Business context makes thresholds smarter and less mechanical.

Teams should tune a Safe Brand Monitoring Engine so that a small but important issue can rise above a large but harmless volume burst. The best alerts travel only when action is required.

Within a Safe Brand Monitoring Engine, alert routing matters as much as detection, because speed collapses when nobody knows who owns the next move. Ownership is what prevents costly delays during stressful moments.

Smart teams use a Safe Brand Monitoring Engine to assign different paths for support issues, product bugs, reputation attacks, and executive-level threats. Different issue types need different playbooks and different tone.

A reliable Safe Brand Monitoring Engine does not hide the why behind an alert; it includes the source, the trend line, and the reason the message crossed a threshold. Good alerts explain themselves before the reader asks why.

The moment a Safe Brand Monitoring Engine starts producing too many false positives, trust in the system falls and the team begins to ignore real danger. False positives slowly train people to ignore the system.

Reading tone, voice, and meaning

To deepen analysis, a Safe Brand Monitoring Engine should read language patterns, because word choice often exposes hesitation, enthusiasm, or disappointment. Words and phrasing often change before the customer changes behavior.

Teams can strengthen a Safe Brand Monitoring Engine by layering Sentiment and Voice Data with product context, journey stage, and customer value. Layering context makes tone analysis more actionable.

When a Safe Brand Monitoring Engine includes tone shifts, it becomes possible to spot when a neutral thread is quietly turning negative. Tone changes often reveal growing disappointment before an explicit complaint appears.

A Safe Brand Monitoring Engine should also compare what people say in public with what they tell support agents, since the two stories are often different. Public language can be polite while private language is blunt.

That contrast helps a Safe Brand Monitoring Engine uncover hidden friction that would be invisible in simple sentiment scores alone. That hidden friction is often where retention risk begins.

Good interpretation turns a Safe Brand Monitoring Engine into a map of trust, expectation, and emotional momentum across the customer lifecycle. Emotional momentum is a strong clue to future action.

The deeper the analysis, the better the Safe Brand Monitoring Engine can explain not just what changed, but why the change matters. Meaning matters more than isolated sentiment scores.

Retention, churn, and revenue protection

A Safe Brand Monitoring Engine becomes even more strategic when it helps the company predict churn before a renewal decision is made. Early warning lets the team intervene before trust is lost.

Support frustration, repeated feature requests, and low-enthusiasm language often combine inside a Safe Brand Monitoring Engine long before a customer cancels. Repeated frustration usually means a broader product or service issue.

Product teams can use a Safe Brand Monitoring Engine to detect whether the same complaint appears across many accounts or only in one segment. Segment-level pattern detection helps teams avoid one-off overreactions.

In subscription businesses, a Safe Brand Monitoring Engine can support Predicting SaaS Customer Churn by highlighting weak engagement patterns early. The earlier a team sees risk, the cheaper the rescue usually is.

The same Safe Brand Monitoring Engine can guide account managers toward the customers who need reassurance, training, or a faster fix. Proactive account management feels more human to the customer.

Sales leaders also gain value from a Safe Brand Monitoring Engine, because the same signals that warn about churn can reveal hesitation in late-stage deals. Hesitation in deals often shows up as hesitation in language.

That is why a Safe Brand Monitoring Engine is not only a reputation tool; it is also a revenue protection system. Retention and reputation often rise or fall together.

Better lead scoring and stronger pipeline quality

A Safe Brand Monitoring Engine can improve pipeline quality when it helps teams read buying intent more accurately. Intent is stronger when it is tied to real behavior, not just clicks.

Repeated positive mentions, solution-specific questions, and comparison language can all strengthen a Safe Brand Monitoring Engine signal for prospect readiness. Prospect language often reveals buying confidence and urgency.

Marketing teams often connect a Safe Brand Monitoring Engine with Behavioral Lead Scoring Drives Better Closings because behavior is often a stronger predictor than demographics. Behavior usually outperforms static profile data in later-stage decisions.

When a Safe Brand Monitoring Engine feeds lead scoring, the sales team can focus on people who are not just active but emotionally engaged. Emotionally engaged prospects are easier to move forward.

The result is better timing, better follow-up, and fewer wasted conversations that go nowhere. Timing is one of the biggest advantages in modern sales.

A good Safe Brand Monitoring Engine does not treat every positive signal as a sale; it checks whether the person is asking about fit, pricing, urgency, or alternatives. Fit questions are more valuable than generic praise.

That distinction keeps a Safe Brand Monitoring Engine honest and prevents the team from overvaluing casual interest. Honest scoring keeps sales focused on true opportunities.

Workflow, ownership, and governance

For day-to-day use, a Safe Brand Monitoring Engine needs a simple workflow that shows who reviews, who responds, and who escalates. If ownership is unclear, the best alert can still fail.

The safest Safe Brand Monitoring Engine programs define ownership clearly so urgent messages do not disappear between teams. Clear accountability prevents confusion when the pressure is high.

Documentation matters too, because a Safe Brand Monitoring Engine becomes easier to scale when the team can see how decisions were made last time. Historical decisions become useful when they are easy to revisit.

Training should teach people how to read alerts, how to verify context, and how to avoid emotional overreaction inside a Safe Brand Monitoring Engine. Training reduces panic and improves response quality.

The best Safe Brand Monitoring Engine governance also includes review cycles so the rules stay aligned with product changes and market behavior. Review cycles keep the system aligned with reality.

Because markets shift, a Safe Brand Monitoring Engine should be checked regularly for stale triggers, outdated thresholds, and missed channels. Fresh rules stop the workflow from going stale.

Over time, a Safe Brand Monitoring Engine becomes a learning loop that improves with every incident, every save, and every resolved concern. Every resolved issue should improve the next response.

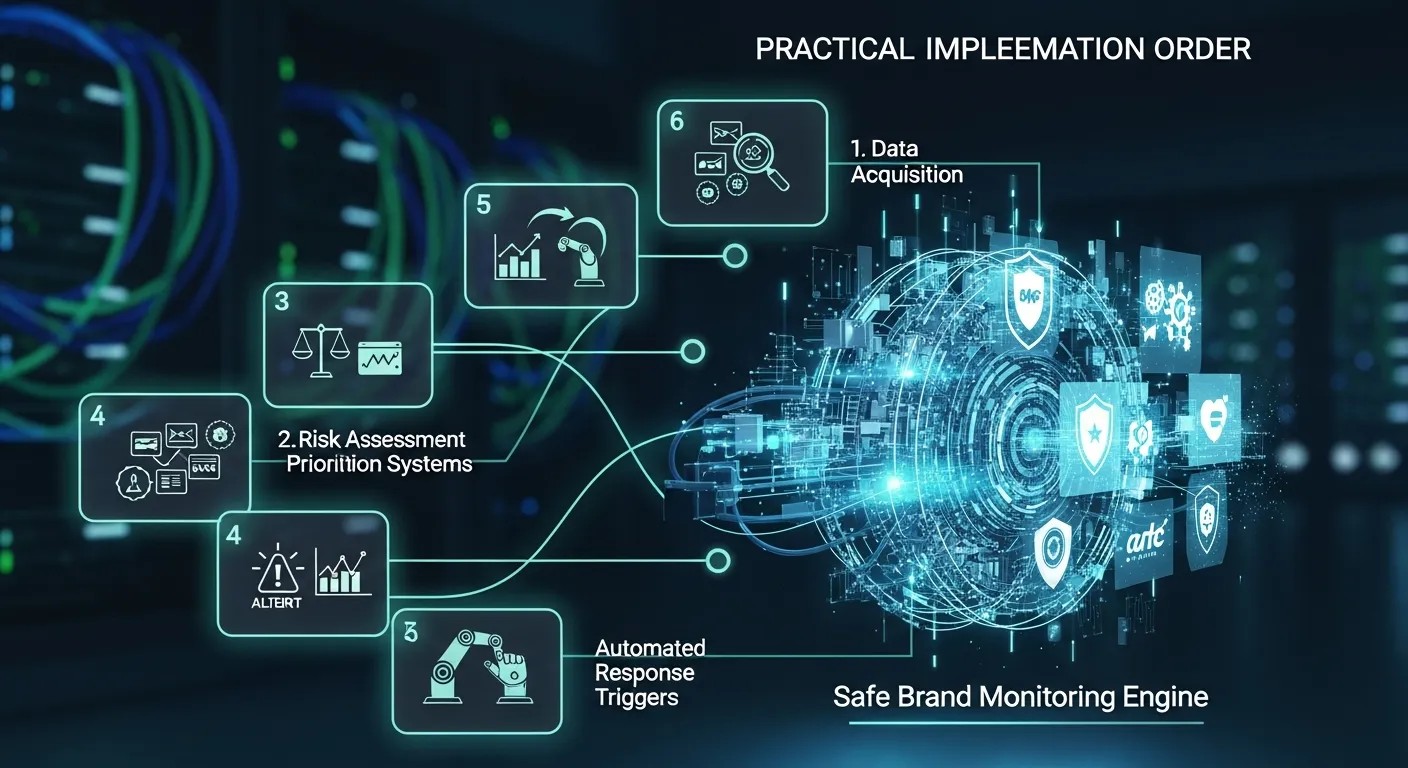

Practical implementation order

Start small and choose one critical audience segment, one high-value channel, and one response owner. A Safe Brand Monitoring Engine becomes useful faster when the first version is narrow and clear.

Then define the exact events that deserve alerts, such as sudden negative spikes, repeated complaints, product bug reports, or executive mentions. A Safe Brand Monitoring Engine should focus on patterns that can change revenue or trust.

After that, create a response playbook with two layers: routine responses and urgent escalation. The playbook gives the Safe Brand Monitoring Engine a path to action instead of just observation.

Next, assign review times so the team checks whether alerts are accurate and whether the threshold needs adjustment. A Safe Brand Monitoring Engine improves when teams treat calibration as normal maintenance.

Once the workflow stabilizes, expand coverage to more channels, more segments, and more use cases. The Safe Brand Monitoring Engine should grow only after the first loop is reliable.

The final step is shared reporting, because teams need to see not only what was detected, but what was done and what changed afterward. A Safe Brand Monitoring Engine becomes powerful when outcomes are visible.

Common mistakes to avoid

One mistake is chasing every mention as though all of them are equally important. A Safe Brand Monitoring Engine works best when priority is tied to business value.

Another mistake is ignoring emotional tone and relying only on keyword matches. A Safe Brand Monitoring Engine that misses tone will often miss the real problem.

Some teams also fail by creating alerts without owners. A Safe Brand Monitoring Engine cannot create value if no one is assigned to act.

Another common problem is poor cleanup of old rules. A Safe Brand Monitoring Engine gets noisy when outdated triggers are left behind after product changes.

Teams sometimes launch with too many channels at once. A Safe Brand Monitoring Engine is easier to trust when it starts focused and expands with discipline.

The last mistake is never measuring the business result. A Safe Brand Monitoring Engine should improve response speed, retention, and decision quality, not just dashboard activity.

How to measure success

Measure both response quality and business outcomes. Good signs include fewer missed issues, faster first response times, better trend visibility, improved retention, and more consistent cross-team action.

You should also look at how often alerts lead to useful decisions rather than noise. A Safe Brand Monitoring Engine is valuable when it reduces confusion and makes priorities clearer.

If the team trusts the system and uses it to prioritize work, that is a strong sign it is doing real job. Confidence is a practical metric because teams only use what they trust.

Track whether customer pain is identified earlier, whether resolution is faster, and whether repeat complaints fall over time. A Safe Brand Monitoring Engine should make improvement visible in the numbers.

Compare before-and-after periods so you can see whether the workflow is actually shortening reaction time. A Safe Brand Monitoring Engine should compress the distance between signal and action.

The most important test is practical: does the organization catch problems earlier, respond better, and protect revenue more effectively than before? If the answer is yes, the system is working.

Conclusion

Ultimately, Safe Brand Monitoring Engine helps a brand move from reactive guessing to disciplined growth. It protects trust, shortens response time, and gives every team a shared view of what customers feel, need, and fear. Used well, it becomes a quiet advantage that improves reputation, retention, and revenue at the same time.

Frequently Asked Questions (FAQ

1) What is the main purpose of this system?

Its purpose is to help teams notice reputation risk, customer frustration, and market shifts before those signals become expensive. A strong monitoring setup does more than collect mentions. It turns conversation into action by identifying what matters, who should respond, and how urgently the team should move. That is especially useful when the brand has multiple channels and many people are speaking at once. The right process also keeps attention focused on real business impact instead of vanity metrics. When done well, it becomes a practical part of growth, not a separate reporting layer.

2) How do I decide which channels to monitor first?

Start with the places where customers already speak honestly and where reputation can spread quickly. Review sites, social platforms, community forums, support tickets, app stores, and sales calls are common starting points. The right mix depends on your audience and your product type. If you sell software, support transcripts and review sites may matter more than some public networks. If you sell consumer goods, social conversation may be faster and more emotional. The goal is not to monitor everything at once. It is to begin with the sources most likely to influence trust, retention, and referral behavior.

3) Why are alerts better than manual checking?

Manual checking is too slow when message volume rises or sentiment changes suddenly. Alerts help the team respond at the moment a trend starts, not after the damage has spread. They also make ownership clearer, because the right person can be routed to the issue immediately. That matters when a complaint needs support, a bug needs product, or a public statement needs leadership review. A good alert system reduces wasted time and helps the company react with confidence. It also protects consistency, because people are less likely to miss the same pattern repeatedly.

4) How does sentiment analysis help beyond simple monitoring?

Sentiment analysis adds context to raw mentions, which makes the system smarter and more useful. A mention count tells you how much is being said, but tone tells you whether the conversation is moving toward trust or risk. That extra layer helps teams identify frustration, excitement, hesitation, or confusion. It also helps prioritize responses because not every mention deserves the same level of attention. Combined with source and topic data, sentiment can reveal early product issues, support problems, or opportunities to improve messaging. Used well, it gives leaders a much clearer view of customer emotion.

5) Can this support retention strategy?

Yes, because retention often depends on how quickly a company notices pain and how well it responds. When customers complain publicly, repeat the same issue, or show declining enthusiasm, those signals often appear before cancellation. A monitoring workflow can surface those patterns early enough for account managers, support teams, or product owners to act. That might mean a faster fix, a better onboarding step, a training call, or a reassurance message. In many businesses, prevention is cheaper than recovery. The system is valuable because it gives the organization a chance to intervene before disappointment hardens into churn.

6) How is this useful for sales?

Sales teams benefit when conversation data reveals buying intent, hesitation, and urgency. People often signal readiness through the questions they ask, the comparisons they make, and the way they talk about fit, price, or timing. Those clues can help rank leads more intelligently and reduce time spent on weak opportunities. They also help reps tailor their follow-up so the message feels relevant rather than generic. A monitoring layer is not a replacement for CRM discipline, but it can sharpen the quality of the information sales uses every day. Better signals usually lead to better conversations.

7) What common mistakes should I avoid?

The biggest mistake is treating the system like a passive dashboard instead of a decision engine. Another common problem is setting alert thresholds too low, which floods teams with noise and lowers trust. Teams also struggle when ownership is unclear, because even a strong alert can stall if nobody knows who should act. A third mistake is ignoring context and reading every negative mention as equally important. That leads to overreaction and fatigue. The safest approach is to define priorities, test the rules, review false positives, and improve the workflow as the business changes.

8) How often should the rules be reviewed?

Review the rules regularly, especially after product changes, launches, spikes in volume, or shifts in customer behavior. A threshold that worked last quarter may be too sensitive now or not sensitive enough. The system should evolve with the market, the language customers use, and the risks the brand faces. Regular review also helps teams delete stale rules, add missing channels, and improve routing logic. A monthly or quarterly check is common, but high-growth teams may need more frequent reviews. The key is to keep the process alive so it stays useful and trusted.

9) What makes the best response workflow?

The best workflow is simple enough to follow under pressure and clear enough that nobody wonders who owns the next step. It usually starts with detection, then verification, then routing, and finally action. Each stage should have a person or team responsible for it. The workflow should also include response templates, escalation paths, and closure notes so learning is captured after the incident ends. When the process is transparent, teams move faster and make fewer mistakes. Over time, that consistency improves both customer experience and internal confidence.

10) How do I know whether the system is working?

Measure both response quality and business outcomes. Good signs include fewer missed issues, faster first response times, better trend visibility, improved retention, and more consistent cross-team action. You should also look at how often alerts lead to useful decisions rather than noise. If the team trusts the system and uses it to prioritize work, that is a strong sign it is doing real job. The most important test is practical: does the organization catch problems earlier, respond better, and protect revenue more effectively than before? If the answer is yes, the system is working.